Crowdsourced Factchecking

In Summary

In the current climate of information overload, the demand for factchecking is increasing. Factcheckers are often small teams that struggle to keep up with that demand. In recent years, new organisations like WikiTribune have suggested crowdsourcing as an attractive and low-cost way for factchecking to scale.

Here’s my take. There are three components to crowdsourcing that you are trading off:

- Speed: how quickly the task can be done.

- Complexity: how difficult the task is to perform. The more complex the task, the more oversight it needs.

- Coverage: the number of topics or areas you can cover.

These exist in a trade-off triangle, so you can only optimise for two of these at a time — the other has to be sacrificed.

The difficulty with crowdsourced factchecking is that it could pretty much cover any topic, so you have to have coverage. And it’s a complex task, so you have to have complexity. And it needs to happen fast if it’s to be effective, so you need speed too.

Previous efforts have just ended up doing the work themselves, because otherwise they miss the boat. By a few weeks.

The most notable attempts at trialling crowdsourced factchecking are TruthSquad and FactcheckEU. Although neither project has proven that crowdsourcing could help scale the core business of factchecking, they did show that there may be some value in the activities around it.

And now in more detail

High-profile examples of crowdsourcing that harness and gather collective knowledge, such as Quora, Stack Overflow and Wikipedia, have proven that large crowds can be used in meaningful ways for complex tasks over many topics. But the trade-off is speed.

Projects like mySociety’s Gender Balance (which asks users to identify the gender of politicians) and Democracy Club’s Candidate (which crowdsources information about election candidates) have shown that small crowds can have a big effect when it comes to simple tasks, done quickly. But the trade-off is coverage.

At Full Fact during the 2015 election, we had 120 volunteers aid our media monitoring operation. They looked through the entire media output every day and extracted the claims being made. The trade-off here was complexity of the task.

The only examples that have operated at high levels of complexity, within short timeframes, and tried to cover everything that might need factchecking are TruthSquad and FactcheckEU.

In 2010 the Omidyar Network funded TruthSquad, a pilot to crowdsource factchecking run in partnership with Factcheck.org. The primary goal of the project was to promote news literacy and public engagement, rather than to devise a scalable approach to factchecking.

Readers could rate claims, and then with the aid of a moderating journalist, factchecks were produced. In an online writeup, project lead Fabrice Florin concluded that:

“despite high levels of participation, we didn’t get as many useful links and reviews from our community as we had hoped. Our editorial team did much of the hard work to research factual evidence. (Two-thirds of story reviews and most links were posted by our staff.) Each quote represented up to two days of work from our editors, from start to finish. So this project turned out to be more labor-intensive than we thought[…]” —Fabrice Florin

The project was successful in engaging readers to take part in the factchecking process. However, it didn’t prove itself a model for producing high quality factchecks at scale.

FactcheckEU was run as a factchecking endeavour across Europe by Alexios Mantzarlis. Alexios is the co-founder of Italian factchecking site Pagella Politica and now Director of the International Fact-Checking Network. In an interview he said:

“there was this inherent conflict between wanting to keep the quality of a factcheck high and wanting to get a lot of people involved. We probably erred on the side of keeping the quality of the content high and so we would review everything that hadn’t been submitted by a higher level fact checker.” — Alexios Mantzarlis

The Factchecking Process

To understand why crowdsourced factchecking hasn’t proven successful we have to (shock-horror) talk about the different stages of factchecking:

- Monitoring

- Spotting

- Checking

- Publishing

- The other bits

Monitoring and spotting

One of the first parts of the factchecking process is looking through newspapers, TV, social media etc to find claims that can be checked, and are worthy of being checked. The decision to check is often made by an editorial team, with key checks and balances in place.

If claims are submitted and decided upon by the crowd, how can you ensure that the selection of claims is spread fairly across the political spectrum and sourced from a spread of outlets?

Mantzarlis said that “interesting topics would get snatched up relatively fast.” What does that mean for the more mundane but still important claims? Will opposition parties always have a vested interest in factchecking those in power and will this skew the total set of factchecks produced?

Will well-resourced campaigns upvote the claims that best serve their purposes? What about the inherent skew of volunteering? The people who are more likely to donate their time have higher household incomes. What does this mean for selection bias?

Checking and publishing

The second stage encompasses the research and write up of the factcheck: primary sources and expert opinions are consulted to understand the claims, available data and their context.

Conclusions are synthesised and content is produced that explains the situation clearly and fairly. TruthSquad and FactcheckEU both discovered that this part of the process needed more oversight than they expected.

“We were at the peak a team of 3 […] We foolishly thought, and learnt the hard way, that harnessing the crowd was going to require fewer human resources, in fact it required, at least at the micro level, more.” — Alexios Mantzarlis

The back and forth of the editing process, which ensures a level of quality, was in this case also a key demotivator:

“where we really lost time, often multiple days, was in the back and forth, […] it either took more time to do that than to write it up ourselves, or they lost interest”. — Alexios Mantzarlis

The other bits

After research and production of the content, there are various activities which take place around the factcheck. These include asking for corrections to the record, promotion on social media or turning factchecks into different formats such as video. Mantzarlis noted:

“most of the success we got harnessing the crowd was actually in the translations. […] People were very happy to translate factchecks into other languages.” — Alexios Mantzarlis

While TruthSquad and FactcheckEU didn’t prove that crowdsourcing is useful for the research at the core of the factchecking process, they did show that it may yield benefit for the activities around it. This is explored more at the end.

The need for speed and the risk of manipulation

Phoebe Arnold, Head of Communications and Impact at Full Fact, says “the quicker the factcheck is published the more likely we are to have an impact on the debate”.

Let’s take the case of ‘live’ factchecking: researching and tweeting in real time alongside a political debate or speech. At Full Fact only the most experienced factcheckers are trusted with this task, for fear of the reputational damage that an inaccurate factcheck may cause.

Paul Bradshaw, who ran the crowdsourced investigations website Help Me Investigate, says “[crowdsourcing] suits long-running stories rather than breaking ones”. In live factchecking, every sentence spoken by a politician during an electoral debate could be a breaking story, and because claims are easily surfaced, crowdsourcing claims to check has little value.

Not all factchecking happens at this extreme speed, but it does happen within hours or a few days. I don’t know of many volunteer efforts that work at this speed with as many interactions between participants. I want to hear about them if they exist!

Even if crowd-powered work somehow gets to the point where it can be published, more work is needed to verify its accuracy prior to publication. In a report for the Reuters Institute, Johanna Vehkoo concludes:

“Crowdsourcing is a method that is vulnerable to manipulation. It is possible for users to deliberately feed journalists false information, especially in quickly developing breaking-news situations. In an experiment such as the Guardian’s MPs’ Expenses a user had the possibility of smearing an MP by alleging misconduct where there wasn’t any. Therefore journalists need to take precautions, identify the risks, and perform rigorous fact-checking.”

TruthSquad, FactcheckEU and the MPs’ Expenses project all required a final factchecking step to ensure the quality of the information and to ensure that their legal obligations were met.

Is crowdsourced factchecking’s utility undermined if this is always necessary?

Primary Research

At Full Fact, and many other factchecking organisations, factchecking starts from primary sources. This is an important point.

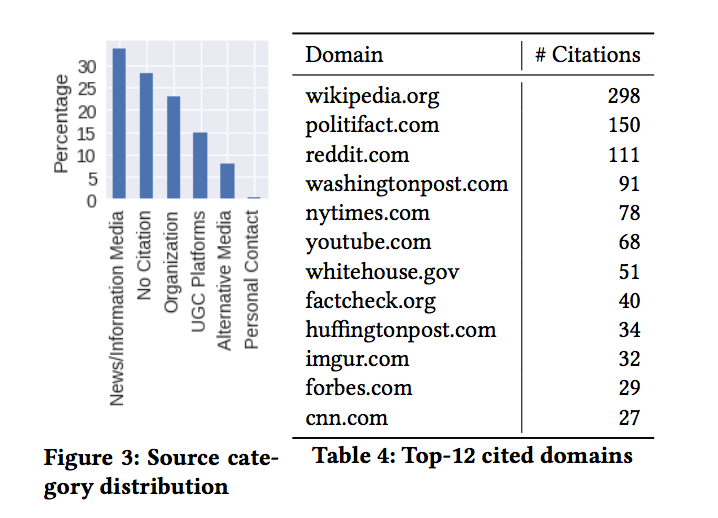

A recent analysis of Reddit’s political factchecking subreddit argued there is a future for crowd-powered factchecking and that this subreddit is a pretty good model. The authors claimed this approach could help build a sustainable model for factcheckers, but the data they collected shows that most of the citations in the factchecks produced didn’t come from primary sources.

Instead, 75% of the citations from the Top-12 sources came from secondary sources: factchecking organisations (including Politifact and Factcheck.org), news outlets and Wikipedia (which exclusively sources citations from secondary sources).

Crowdsourcing models that could work

If crowdsourcing of core factchecking activities either fails to surface novel information (as shown in the Reddit study) or happens at a slower pace and requires substantial time to verify (as in TruthSquad and FactcheckEU), is there any hope for crowdsourcing to help combat misinformation? I want there to be.

The Tow Center for Digital Journalism has published a Guide to Crowdsourcing. They suggest tasks that might be suitable could include:

- Voting: prioritising which stories reporters should tackle.

- Witnessing: sharing what you saw during a breaking news event or natural catastrophe.

- Sharing personal experiences: divulging what you know about your life experience. […]

- Tapping specialised expertise: contributing data or unique knowledge. […]

- Completing a task: volunteering time or skills to help create a news story.

- Engaging audiences: joining in call-outs that range from informative to playful.

Despite crowdsourcing’s shortcomings in factchecking research activities, these examples show opportunities where it could be beneficial for other areas of factchecking, provided you can jump through the hoops of the trade-off triangle.

Submitting claims to check

Readers submit claims which they believe should be checked. The International Fact-Checking Network’s code of principles encourages readers to send claims to factcheck. As discussed, the onus is still on the factcheckers to choose a balanced set of claims to check, but this could broaden the visibility of claims across a range of closed media. This also starts to get at the problem of claims being shared in closed peer-to-peer networks.

Annotating datasets and extracting information in advance

Mechanical tasks, or semi-skilled tasks, like extracting information and placing it in standard formats, could support factcheckers. This is contingent on the aforementioned trade-off between managing individuals’ contributions and the effort to verify these contributions, but the advantage here is that content can be verified ahead of when it is needed.

As more natural language processing and machine learning is applied to factchecking activities, there is a slight growth in this area of crowdsourcing. For example at Full Fact we collected 25,000 annotations from 80 volunteers for our automated claim detection project.

Curated or earned expertise

“The assumption that crowds are comprised of amateurs continues to permeate the popular press, but these assumptions do not appear to be coming true in anecdotal accounts or in the empirical research into crowdsourcing” — Crowdsourcing, Brabham, 2013

Already, some factcheckers use pre-vetted lists of experts who meet the standard required to support factchecking of certain topics. It is also possible that they could use the crowds to attract subject experts who can contribute to factchecks alongside factcheckers. The critical element here is being able to rely on that contribution. Young journalists, researchers, and individuals will need to prove that they are reputable over time. How this reputation could be collected or proven remains to be seen.

Spreading the correct information

During the 2016 EU referendum in the UK, thousands of members of 38 Degrees took part in a fact distribution network. They would take the results of factchecks and repost them on forums, social media groups, and share with friends and family. This gave us coverage into communities we wouldn’t have otherwise had.

Crowdsourcing as digital literacy

TruthSquad discovered that “amateurs learned valuable fact-checking skills by interacting with professionals”, and later said that a primary focus of crowdsourced factchecking was to teach skills rather than produce usable output.

Volunteering anywhere engenders a feeling of loyalty which — for factchecking organisations who rely on donations — is an attractive consequence.

So…

We’d be foolish to ignore a large and increasingly willing resource to help in the fight against misinformation. But that resource needs to be deployed intelligently if it’s to be effective.

It seems, so far at least, that the power of the crowd can’t be harnessed in the research phase, where the burdens of accuracy, speed and complexity are too high, but can be in the activities around factchecking.

There also seem to be encouraging signs that by doing so we improve digital literacy and therefore, in the long view, might also help stop the spread of misinformation in its tracks.

This article has been cross-posted on Medium.