Why is TikTok penalising content designed to highlight misinformation?

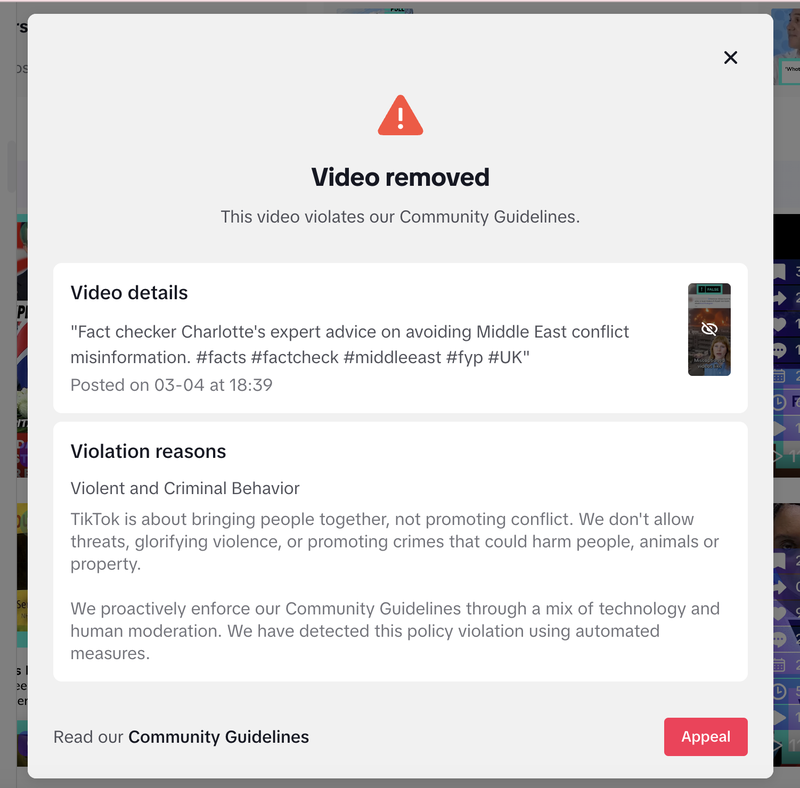

Recently, we received a community guidelines violation from TikTok on a video featuring one of our fact checkers, where she offered practical advice on how to avoid misinformation related to conflict in the Middle East. As a result, the video was removed and is no longer accessible to viewers.

It is ironic that a video aimed at reducing harm and encouraging critical thinking was flagged for “violent and criminal behaviour.” The violation note reads: “TikTok is about bringing people together, not promoting conflict. We don’t allow threats, glorifying violence or promoting crimes that could harm people, animals or property.”

We appealed the decision. The appeal was rejected.

According to TikTok, its community guidelines are enforced through “a mix of technology and human moderation.” In our case, they say the alleged violation was identified through automated systems.

Only after we completed a basic “understanding the guidelines” quiz was the strike eventually removed and our account restored to good standing. While we’re relieved at the outcome, the episode raises serious concerns about how moderation systems are functioning.

Replacing the human with a machine

Over the past two years, TikTok has reportedly cut hundreds of UK roles within its trust and safety team, alongside removing entire moderation teams in markets such as the Netherlands and Berlin. This shift away from human oversight toward greater reliance on automated systems risks undermining the platform’s ability to effectively identify and respond to harmful misinformation.

Our experience points to a key weakness: over-reliance on automated moderation without sufficient contextual understanding. Our content was educational. Yet the system appears to have flagged it based on keywords or themes alone, without meaningfully grasping our intent.

TikTok claims it removes 99.3% of violating content, but the real issue lies in how those violations are defined. That figure may hold when content is clearly and indisputably in breach of its rules, but it becomes far less reassuring in cases of false positives (like ours), or where genuinely harmful content is not captured by its automated systems. Of course, automated tools - artificial intelligence among them - can speed up the process, but these are nuanced and important judgements that require human moderation.

The implications are wider than one mistaken strike. If content aimed at countering misinformation or promoting media literacy is penalised, then we are left with a poorer information environment - where helpful, accurate content is suppressed, while platforms like TikTok continue to talk up their commitment to tackling misinformation.

An appeal that… didn’t appeal

Despite submitting what we believed was a surefire appeal, the decision was upheld without a meaningful explanation. We’ve been left with several questions. At any point did a human moderator review our video? On what grounds was the appeal rejected? And does this mean we’re effectively barred from creating content about any conflict, even when our goal is to build a better information environment?

We've asked TikTok to look into this. They've told us they will investigate our case and we look forward to hearing from them.