AI images, old videos and false viral claims: what to watch out for when checking posts about the Middle East on social media

In recent days we’ve seen a surge of misinformation on social media relating to the ongoing conflict in the Middle East.

We’ve identified at least 15 miscaptioned videos and eight AI-generated images that have had thousands of interactions between them, and we’ve written fact checks on 17 of the most viral posts, as listed below. But that’s just the tip of the iceberg, with fact checkers and verification experts around the world flagging dozens more.

It’s becoming increasingly common to see AI-generated content shared as if it is genuine in the wake of global news events. Even images that appear to be AI-generated but aren’t very convincing, such as those with visible watermarks (like these pictures supposedly showing US special forces being captured), have been shared at scale. And the sheer volume of this fake content, and the ease with which it is generated, is concerning.

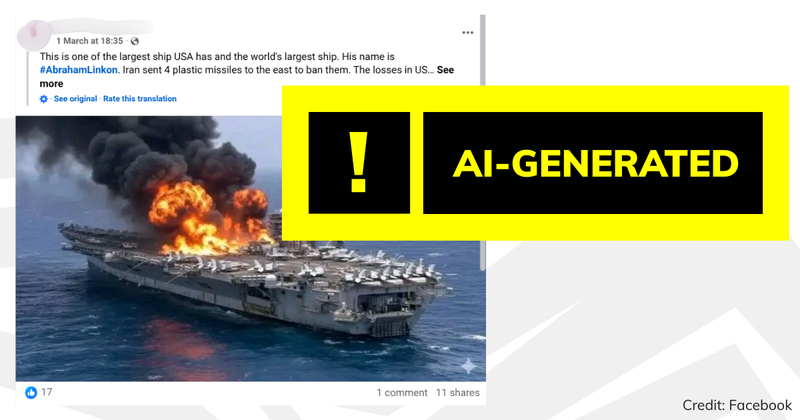

In recent days we’ve seen a fake image of Iran’s Supreme Leader Ayatollah Ali Khamenei buried in rubble, and AI images supposedly showing the Burj Khalifa and the USS Abraham Lincoln on fire.

In many cases though, the misinformation we’ve seen doesn’t rely on new technology, but on miscaptioned, recycled imagery.

Widely shared posts we saw at the start of this week used a picture of the home secretary Shabana Mahmood at a Southport mosque in 2024, alongside claims she had observed a minute’s silence for Ayatollah Khamenei in Birmingham. She hadn’t.

Some of the videos we’ve seen are ones we’ve fact checked before during other conflicts.

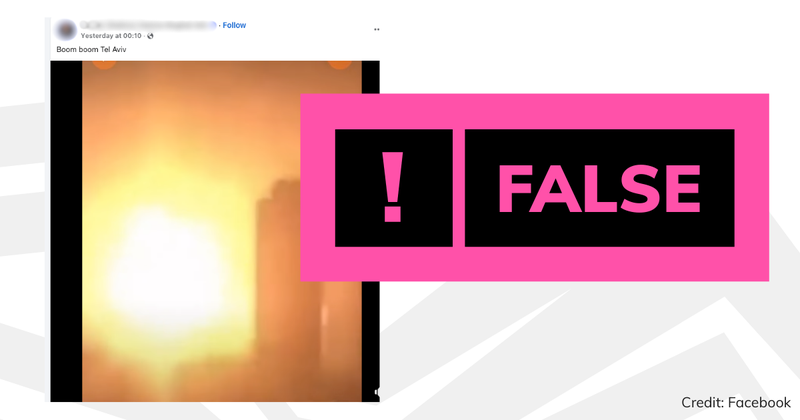

For instance, one striking video of explosions near a building, supposedly in the Israeli city of Tel Aviv, actually shows a warehouse fire in China in 2015. That’s not the first time we’ve seen that specific clip shared with false claims it showed Tel Aviv: the same thing happened following Iran’s missile attack on Israel in October 2024. And before that, we’d seen the clip miscaptioned and shared following Russia’s invasion of Ukraine in 2022.

Old footage of football celebrations in Algiers that we’d previously fact checked in relation to Gaza has also recirculated, with false claims it showed Iranian attacks on Tel Aviv. And we’ve even seen a miscaptioned video, supposedly showing US bases in the Gulf under attack, which dates all the way back to the start of the Iraq war in 2003. That clip has gone viral with incorrect captions before too.

Other clips are far more recent, but still unrelated to the current conflict. A video of a huge fireball shared with claims it shows the US embassy in Saudi Arabia being attacked has been online since at least 6 February this year, before the latest strikes began. Another video was shared with claims it showed an Israeli plane targeted at Ben Gurion Airport just before take-off, but it actually shows an incident in the United States last year.

We’ve seen plenty of misinformation related to the attacks on Dubai in recent days too. For example, footage of a 2015 apartment fire in a neighbouring city and an old video from 2024 were shared with false claims they show missile strikes on the UAE.

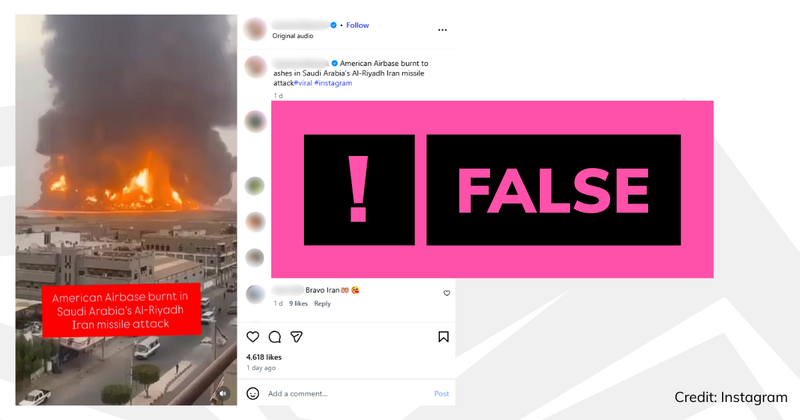

Another clip has been posted with false claims it shows a US airbase in Saudi Arabia “burnt to ashes” after an Iranian missile attack. Using features visible in the video, like a distinctive roof marked “unicef”, the panels on the roof of the neighbouring building and patches of greenery, we matched it to satellite images of a road in a Yemeni port, where it was filmed in 2024.

How to fact check online claims yourself

As we always say, during unfolding global events it’s essential to consider whether what you see online is accurate, so you can avoid sharing misleading information.

If you are suspicious of an image or video online, but not certain it’s misleading, one of the best options is to do a reverse image search to see if it has appeared online before.

When using Google’s reverse image search function, you can click “About this image” to see if a picture has been flagged as “Made with Google AI”. If it has been, this means the image contains an embedded SynthID digital watermark that is not visible to the human eye, but can be used to identify content which has been created, or at least altered, by Google’s AI tools. (A lack of SynthID doesn’t prove the picture wasn’t made with AI, however.)

Some AI tools may leave visible watermarks, such as the logos of Sora (the text-to-video generator from OpenAI, which created ChatGPT), Gemini or Grok. This can give us strong evidence that even the most convincing content isn’t genuine.

But it’s worth remembering these watermarks can easily be cropped away before an image is shared and that content can also often be generated without a watermark.

We have many more tips in our guides on how to spot misleading images and videos. We’ve also created a toolkit to help identify misinformation, and written about how to spot AI-generated images, videos and audio.

17 viral Middle East conflict posts we’ve fact checked so far

- A video of explosions near a building which actually shows a 2015 incident in China, not an attack on Tel Aviv

- A fake image of the Burj Khalifa burning, which was created or altered using AI

- False claims that the home secretary took part in a minute’s silence for Ayatollah Khamenei

- A fake image of Ayatollah Khamenei buried in rubble, which was created or altered using AI

- Old footage of a fire after an Israeli airstrike on Yemen which has been shared with false claims it shows a US airbase in Saudi Arabia

- A video shared with claims it shows people evacuating an Israeli plane at Ben Gurion airport—it was actually filmed in the US last year

- An AI-generated image supposedly showing the USS Abraham Lincoln aircraft carrier on fire

- A video of a large fireball, which predates the current conflict but was shared with claims it shows an attack on the US embassy in Saudi Arabia

- A video of a missile strike dating back to at least 2024 which has been shared with claims it shows an Iranian missile strike on Dubai

- Footage of a 2015 apartment fire which has been shared with claims it shows Iran attacking Dubai

- Footage of a fire in Glasgow which the xAI chatbot Grok wrongly claimed showed a blaze in Tel Aviv

- A fake image of a funeral of Iranian schoolchildren killed in a missile strike which was widely shared on social media, including by the Your Party MP Zarah Sultana

- AI-generated images supposedly showing US soldiers “captured by Iran”

- A digitally created video of a US B-2 Spirit stealth bomber flying past a US ship in the Persian Gulf

- A fake image of a US B-2 supposedly “shot down” by Iran

- Footage of a Russian attack on Kyiv shared with false claims it shows an Iranian drone strike on Bahrain

- A video of a house fire in the US shared with false claims it depicts the aftermath of an Iranian missile strike on the home of the brother of Israel’s Prime Minister

Update 13 March 2026

This article was updated to include another seven fact checks we have published on the Middle East conflict.